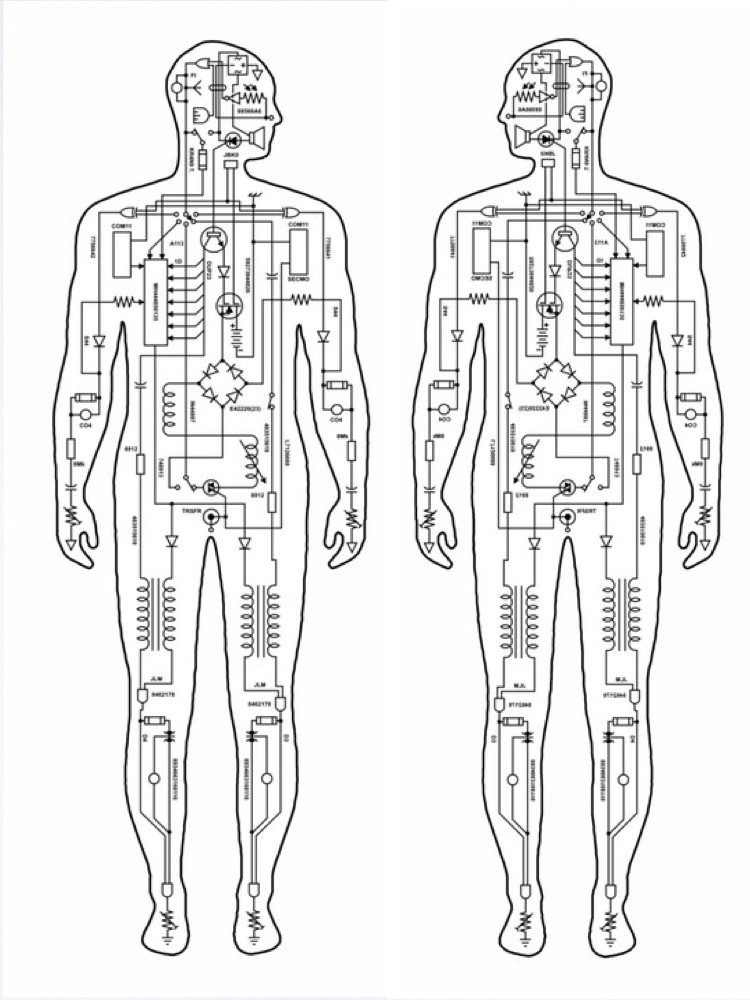

The development of computers and computer science in the 1940s activated a debate between humanists and mechanists over the possibility of intelligent machines. The prospect of thinking machines, or cyborgs, inspired at first religious indignation; intellectual disbelief; and large-scale suspicion of the social, economic, and military implications of an autonomous technology. In general terms, we can identify two major causes for concern produced by cybernetics. The first concern relates to the idea that computers may be taught to simulate human thought, and the second relates to the possibility that automated robots may be wired to replace humans in the workplace. The cybernetics debate, in fact, appears to follow the somewhat familiar class and gender lines of a mind-body split. Artificial intelligence, of course, threatens to reproduce the thinking subject, while the robot could conceivably be mass produced to form an automated workforce (robot in Czech means "worker"). However, if the former challenges the traditional intellectual prestige of a class of experts, the latter promises to displace the social privilege dependent upon stable categories of gender.

In our society, discourses are gendered, and the split between mind and body, as feminist theory has demonstrated, is a binary that identifies men with thought, intellect, and reason and women with body, emotion, and intuition. We might expect, then, that computer intelligence and robotics would enhance binary splits and emphasize the dominance of reason and logic over the irrational. However, because the blurred boundaries between mind and machine, body and machine, and human and nonhuman are the very legacy of cybernetics, automated machines, in fact, provide new ground upon which to argue that gender and its representations are technological productions. In a sense, cybernetics simultaneously maps out the terrain for both postmodern discussions of the subject in late capitalism and feminist debates about technology, postmodernism, and gender.

Although technophobia among women and as theorized by some feminists is understandable as a response to military and scientific abuses within a patriarchal system, the advent of intelligent machines necessarily changes the social relations between gender and science, sexuality and biology, feminism and the politics of artificiality. To illustrate productive and useful interactions between and across these categories, I take as central symbols the Apple computer logo, an apple with a bite taken from it, and the cyborg as theorized by Donna Haraway, a machine both female and intelligent.

We recognize the Apple computer symbol, I think, as a clever icon for the digitalization of the creation myth. Within this logo, sin and knowledge, the forbidden fruits of the garden of Eden, are interfaced with memory and information in a network of power. The bite now represents the byte of information within a processing memory. I attempt to provide a reading of the apple that disassociates it from the myth of genesis and suggests that such a myth no longer holds currency within our postmodern age of simulation. Inasmuch as the postmodern project radically questions the notion of origination and the nostalgia attendant upon it, a postmodern reading of the apple finds that the subject has always sinned, has never not bitten the apple. The female cyborg replaces Eve in this myth with a figure who severs once and for all the assumed connection between woman and nature upon which entire patriarchal structures rest. The female cyborg, furthermore, exploits a traditionally masculine fear of the deceptiveness of appearances and calls into question the boundaries of human, animal, and machine precisely where they are most vulnerable: at the site of the female body.

On the one hand, the apple and Eve represent an organic relation between God, nature, man, and woman; on the other, the apple and the female cyborg symbolize a mass cultural computer technology. However, the distance travelled from genesis to intelligence is not a line between two poles, not a diachronic shift from belief to skepticism, for technology within multinational capitalism involves systems organized around contradictions. Computer technology, for example, both generates a powerful mass culture and also serves to militarize power. Cultural critics in the computer age, those concerned with the social configurations of class, race, and gender, can thus no longer afford to position themselves simply for or against technology, for or against postmodernism. In order not merely to reproduce the traditional divide between humanists and mechanists, feminists and other cultural critics must rather begin to theorize their position in relation to a plurality of technologies and from a place already within postmodernism.

The work of one pioneer in computer intelligence suggests a way that the technology of intelligence may be interwoven with the technology of gender. Alan Turing (1912-1954) was an English mathematician whose computer technology explicitly challenged boundaries between disciplines and between minds, bodies, and machines. Turing had been fascinated with the idea of a machine capable of manipulating symbols since an early age. His biographer Andrew Hodges writes:

In dreaming of such a machine, Turing imagined a kind of autonomous potential for this electrical brain, the potential for the machine to think, reason, and even make errors. Although the idea of the computer occurred to many different people simultaneously, it was Alan Turing who tried to consider the scope and range of an artificial intelligence. Turing's development of what he called a "universal machine," as a mathematical model of a kind of superbrain, brought into question the whole concept of mind and indeed made a strict correlation between mind and machine. Although Turing's research would not yield a prototype of a computer until years later, this early model founded computer research squarely on the analogy between human and machine and, furthermore, challenged the supposed autonomy and abstraction of pure mathematics. For example, G.H. Hardy claims that "the 'real' mathematics of the 'real' mathematicians, the mathematics of Fermat and Euler and Gauss and Abel and Riemann, is almost wholly 'useless.'. . . It is not possible to justify the life of any genuine professional mathematician on the ground of the utility of his work."2 This statement reveals a distinctly modernist investment in form over content and in the total objectivity of the scientific project unsullied by contact with the material world. Within a postmodern science, such claims for intellectual distance and abstraction are mediated, however, by the emergence of a mass culture technology. Technology for the masses, the prospect of a computer terminal in every home, encroaches upon the sacred ground of the experts and establishes technology as a relation between subjects and culture.

In a 1950 paper entitled "Computing Machinery and Intelligence," Alan Turing argued that a computer works according to the principle of imitation, but it may also be able to learn. In determining artificial intelligence, Turing demanded what he called "fair play" for the computer. We must not expect, he suggested, that the computer will be infallible, nor will it always act rationally or logically; indeed, the machine's very fallibility is necessary to its definition as "intelligent."3 Turing compared the electric brain of the computer to the brain of a child; he suggested that intelligence transpires out of the combination of "discipline and initiative." Both discipline and initiative in this model run interference across the brain and condition behavior. However, Turing claimed that in both the human and the electric mind, there is the possibility for random interference and that it is this element that is critical to intelligence. Interference, then, works both as an organizing force, one which orders random behaviors, and as a random interruption which returns the system to chaos: it must always do both. Turing created a test by which one might judge whether a computer could be considered intelligent. The Turing test demands that a human subject decide, based on replies given to her or his questions, whether she or he is communicating with a human or a machine.

When the respondents fail to distinguish between human and machine responses, the computer may be considered intelligent. In an interesting twist, Turing illustrates the application of his test with what he calls "a sexual guessing game." In this game, a woman and a man sit in one room and an interrogator sits in another. The interrogator must determine the sexes of the two people based on their written replies to his questions. The man attempts to deceive the questioner, and the woman tries to convince him. Turing's point in introducing the sexual guessing game was to show that imitation makes even the most stable of distinctions (i.e., gender) unstable. By using the sexual guessing game as simply a control model, however, Turing does not stress the obvious connection between gender and computer intelligence: both are in fact imitative systems, and the boundaries between female and male, I argue, are as unclear and as unstable as the boundary between human and machine intelligence.